The first paper in our AI Transformation Series explains why the way software gets built can determine how well hospitals are protected from revenue risk in a constantly changing healthcare landscape.

Most healthcare technology companies treat AI as a feature: just another capability bolted onto existing systems.

At EnableComp, we made a different choice. We rebuilt our entire software development lifecycle (SDLC) around AI from the ground up. The results are now in production, measured, and documented in the first paper of our AI Transformation Series – The Blueprint: Managing an Enterprise AI-Driven Development Lifecycle.

We're starting here because development velocity is where the whole story begins and because the speed at which we can respond to change is the speed at which your revenue cycle stops bleeding.

There is a meaningful difference between adding AI to a system and rebuilding the system around AI.

Adding AI as a feature delivers a linear gain. One workflow gets faster; one step gets cheaper. While that’s useful, it doesn't change the underlying equation.

When you rebuild your system around AI, the operating model integrates intelligence, automation, and domain expertise across the full lifecycle. As the underlying models improve, the entire system improves with them. When domain experts learn something new, that knowledge extends to every workflow that touches it.

Most RCM vendors add AI tools to their existing systems, leaving the underlying architecture intact. We rebuilt the architecture itself. That distinction determines whether the technology behind your revenue cycle delivers incremental improvement or compounding returns.

Our engineering organization does not consist of developers using copilots. Instead, humans – experts in their fields – orchestrate teams of specialized AI agents across the development pipeline.

Every agent operates within guardrails and review checkpoints and has a defined role, such as:

Human engineers design, govern, and validate the system at every stage.

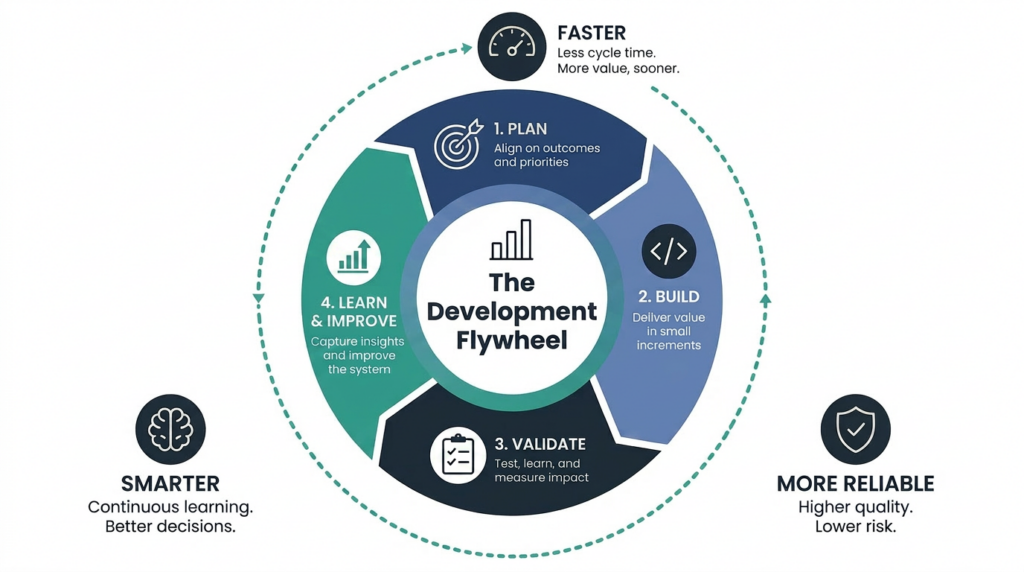

Every sprint produces two outputs. The first is the software that goes into production, and the second is a measurable improvement to the system that built it.

That second output is the flywheel. Our development system gets faster, smarter, and more reliable with every cycle. Organizations that start this work now compound that advantage every quarter. Those that wait will be trying to replicate years of learning that can't be shortcut.

Early on, we committed to a discipline we call "measured, not projected." Everything we share about the system comes from production.

These are not marketing numbers. They are the operational reality of how our engineering organization deploys software today.

You'll never log into this software. Most critical infrastructure works that way; you don't interact with the power grid, but you notice immediately when your house goes dark. The infrastructure behind your revenue cycle and the advantage it delivers will show up in your results.

Revenue cycle management today is not the work it was five years ago. Payer logic shifts more often, regulations change more frequently, and the edge cases that determine whether a claim gets paid keep multiplying. The system working your claims must adapt faster than the complexity it faces.

For example, when a state updates its Workers' Comp fee schedule, something must respond. When a new edge case emerges in Out-of-State Medicaid, something must respond. The speed at which EnableComp encodes that change into the system determines how quickly you stop losing money to it. Our operating model compresses that response from quarters to weeks. Problems that used to persist while technology caught up now get addressed before they compound.

Development velocity isn't a technology metric.

It's a measure of how long you're exposed to revenue loss from a moving target.

If you are evaluating RCM technology partners, try this question: When something changes in the payer or regulatory landscape that affects my claims, how fast does your technology respond – and how do you build that response?

The answer will tell you whether their system is keeping pace with the complexity in your revenue cycle or falling behind it. A vendor that built AI features will describe a roadmap. A vendor that built an AI operating model will describe a cycle time.

That is a meaningful difference. One points to intent, the other points to evidence.

This paper establishes the foundation – how we build. The papers that follow will go deeper into specific layers of the stack. Future papers cover:

We published this series because the industry needs more practitioners sharing what actually works. Boastful claims of AI superiority are everywhere; honest “under the hood” technical accounts are rare. If you are building something similar – or evaluating a partner who claims to have – we hope this series helps.